The Story Behind AI: How it All Started

Written by Elena Rodriguez - Editor in Chief and King Lucero - Editor in Chief

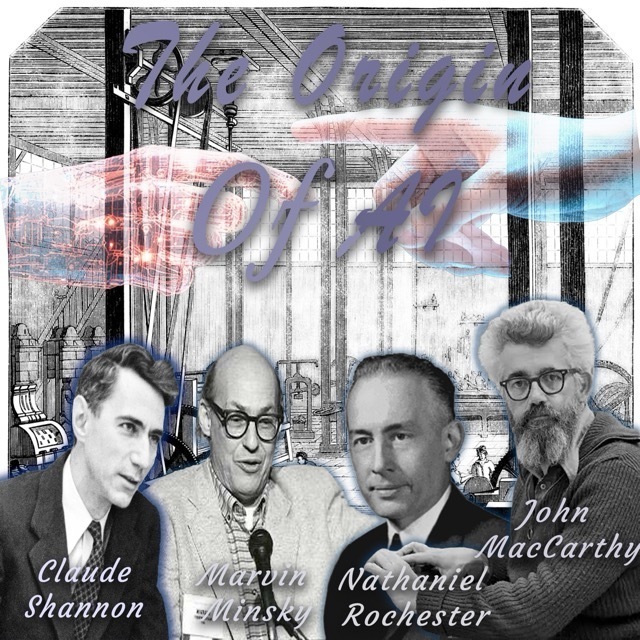

Photo by Zander Menke

AI didn’t suddenly appear overnight. Its lineage goes all the way back to the mid-20th century, a period when ideas from all sorts of scientific disciplines started colliding in fascinating ways. The quest to create machines with human-like intelligence was set in motion by visionaries convinced that computers could be more than glorified calculators. They believed these machines might one day learn, reason and even solve problems on their own, laying the groundwork for what would become one of the most transformative fields of science and technology.

John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon put together a workshop at Dartmouth College where top minds gathered to discuss making machines that could act like human brains. It was here that the term “Artificial Intelligence” was coined, and the agenda for AI research was set.

The field started with Alan Turing’s ideas and grew through hands-on work at the Dartmouth workshop, turning from a mere experiment into a major force in our world. Early AI research, characterized by symbolic approaches and expert systems, set the stage for the more sophisticated machine learning techniques that dominate the field today.

In his groundbreaking 1950 paper, “Computing Machinery and Intelligence,” Alan Turing explored the idea of machines that could think, shaking up the old belief that humans are the only ones with this ability. Meanwhile, the creation of early computers like the Electronic Numerical Integrator and Computer (ENIAC) and Colossus in the 1940s gave us the technology we needed to make those revolutionary theories work in the real world. These machines, though primitive by today’s standards, demonstrated the potential for automated computation and data processing, fueling the initial enthusiasm for AI research. The 1956 Dartmouth Workshop is widely considered the event that officially launched AI as a distinct field of study.

One trend of this time was the creation of expert systems, which are computer programs that mimic the decision-making skills of human experts in certain fields. MYCIN, an early expert system created at Stanford in the 1970s, was designed to identify bacterial infections and suggest the right antibiotics. While expert systems demonstrated the potential of AI to solve complex problems, they also revealed the limitations of the symbolic approach, particularly in dealing with uncertainty and real-world complexity.

Researchers also put constant effort into understanding human language and brain-inspired computing, setting the stage for what came next. The Dartmouth Workshop not only formalized the field but also fostered a sense of optimism and shared purpose among early AI researchers. In the decades following the Dartmouth Workshop, AI research focused primarily on symbolic AI, an approach that sought to represent knowledge as symbols and use logical rules to manipulate those symbols.

As AI keeps pushing forward, its origin remains a story of visionary ideas, technological innovation and collaborative effort. Its beginnings stand as a testament to our ongoing quest to understand and replicate the human mind.

Once the idea of AI took hold, it was applied in many different ways. As mentioned in the article above, Alan Turing published the paper “Computing Machinery and Intelligence” in 1950. It opens with the question “Can machines think?” and then introduces the “Imitation Game,” which involves three people: a man (A), a woman (B), and an interrogator (C) who may be of either sex. The interrogator sits in a separate room and knows the other two only by the labels X and Y. By asking questions, such as the length of their hair, the interrogator tries to work out which is the man and which is the woman. A’s goal is to make the interrogator guess wrong, while B tries to help the interrogator. Turing then asked what would happen if a machine took the place of A.

Then, in 1952, Arthur Samuel, an IBM researcher, began developing a self-learning checkers program. Running on the IBM 701, it improved its skill by playing against itself. It analyzed the board and adjusted its play based on what it had learned from earlier games. Its greatest feat came in 1962, when it defeated checkers champion Robert Nealey.

But AI wasn't called AI until 1956. The term was coined by John McCarthy for the Dartmouth Summer Research Project on Artificial Intelligence, where AI was established as an academic discipline. The conference brought together researchers to explore the hypothesis that every aspect of learning or intelligence can be simulated by a machine.

In 1957, the Perceptron was created by Frank Rosenblatt, a psychologist at Cornell Aeronautical Laboratory. It was a foundational artificial neural network, designed to simulate how the human brain learns and recognizes patterns. As a research psychologist, Rosenblatt saw it as a model of how the brain stores and organizes information.
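For readers curious about what "learning" means here, the short Python sketch below shows the basic idea behind a perceptron: it adds up weighted inputs, makes a yes-or-no guess, and nudges its weights whenever the guess is wrong. This is only an illustration written for this article, not Rosenblatt's original design (which was built as hardware). The function names, the learning rate, and the tiny AND-gate example are all assumptions chosen for simplicity.

```python
def predict(weights, bias, inputs):
    """Return 1 if the weighted sum of the inputs is above zero, else 0."""
    total = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if total > 0 else 0


def train_perceptron(samples, epochs=20, learning_rate=1.0):
    """Adjust weights with the simple error-correction rule.

    `samples` is a list of (inputs, target) pairs (hypothetical format).
    """
    weights = [0.0] * len(samples[0][0])
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            # error is +1, 0, or -1 depending on how the guess was wrong
            error = target - predict(weights, bias, inputs)
            weights = [w + learning_rate * error * x
                       for w, x in zip(weights, inputs)]
            bias += learning_rate * error
    return weights, bias


# Toy pattern to learn: output 1 only when both inputs are 1 (logical AND).
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_SAMPLES)

for inputs, target in AND_SAMPLES:
    print(inputs, "->", predict(weights, bias, inputs))
```

After training, the program answers correctly for all four input pairs, even though nobody told it the rule directly. It found the right weights by correcting its own mistakes.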

These were some of the first uses of AI. Looking back at them helps us better understand how AI can benefit us in the present and how it may shape our future, for better or worse. AI is a tool to be used, not abused. Let us use it for the betterment of humanity, so we can improve both ourselves and our way of life.

https://en.wikipedia.org/wiki/History_of_artificial_intelligence